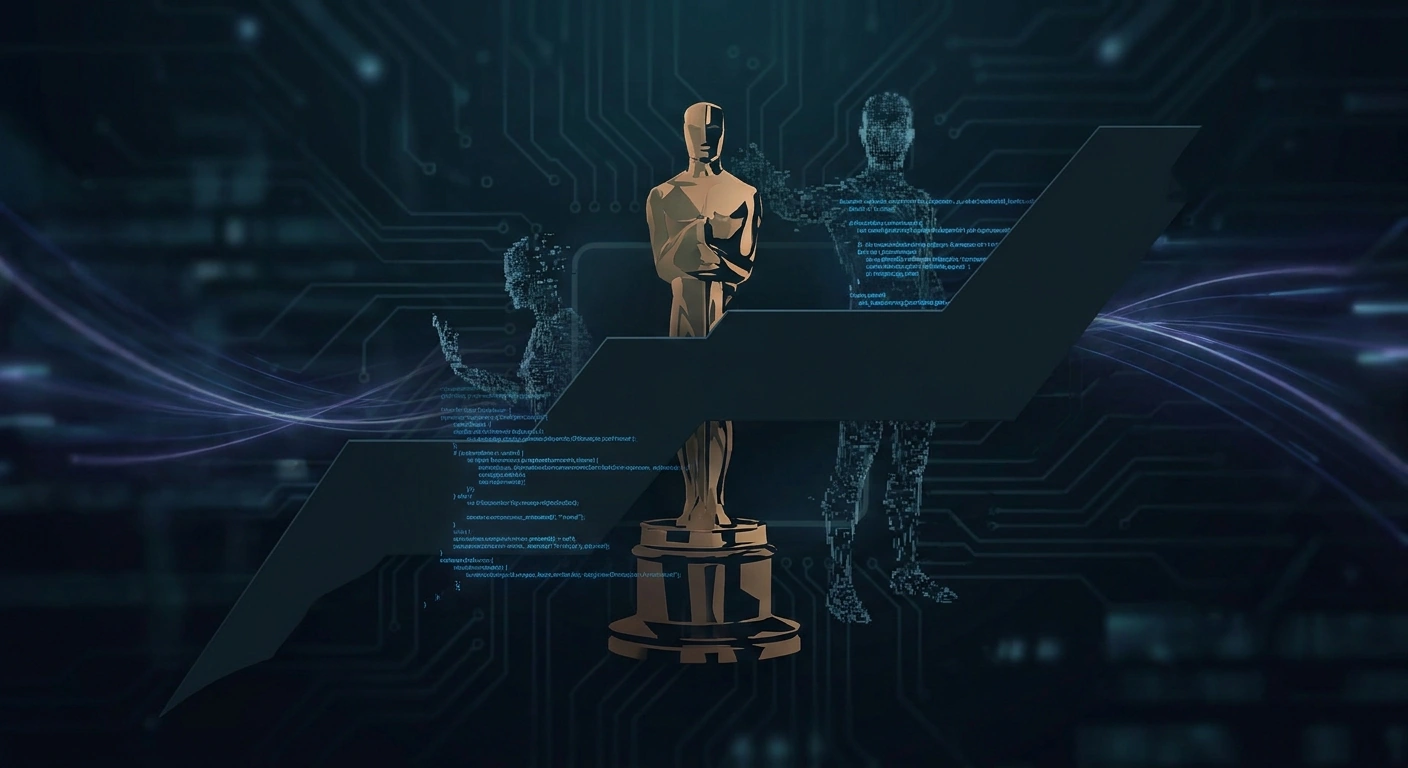

Oscars Bar AI-Generated Actors and Scripts from Eligibility

The Academy of Motion Picture Arts and Sciences has ruled that films using AI-generated actors or scripts are ineligible for Oscar consideration, marking a significant policy stance on synthetic media in Hollywood.

In a landmark policy decision that could reshape Hollywood's relationship with generative AI, the Academy of Motion Picture Arts and Sciences has declared that films featuring AI-generated actors or scripts will no longer be eligible for Oscar consideration. The ruling represents one of the most consequential institutional stances yet against synthetic media in mainstream filmmaking.

What the Policy Covers

The Academy's new guidelines specifically target two categories of generative AI use: fully synthetic performers—digital actors created through text-to-video models, neural rendering, or generative face synthesis—and scripts authored primarily by large language models without substantive human authorship. Films relying on these technologies as core creative components will be deemed ineligible across all award categories.

Notably, the policy does not appear to ban all AI use. Tools used for visual effects enhancement, de-aging, dubbing, upscaling, or post-production cleanup remain permissible—reflecting the reality that machine learning is now embedded throughout the modern VFX pipeline. The distinction the Academy is drawing centers on creative authorship rather than technical assistance.

Why This Matters Technically

The ruling lands at a moment when generative video models have crossed crucial quality thresholds. Systems like OpenAI's Sora, Runway Gen-3, Google's Veo, and emerging open-source models can now produce photorealistic short-form footage with consistent characters, plausible physics, and controllable cinematography. Voice cloning tools from ElevenLabs and others can replicate performers with minimal training data, while LLMs are increasingly capable of generating feature-length screenplay drafts.

That convergence has begun pulling synthetic actors and AI-generated narrative content into serious production discussions. The recently announced "Tilly Norwood," an AI-generated actress being shopped to talent agencies, sparked industry backlash and likely accelerated the Academy's response.

Detection and Enforcement Challenges

The policy raises immediate practical questions about enforcement. How will the Academy verify that a script wasn't substantially LLM-generated? How will it detect whether a supporting performer was synthesized rather than filmed? Current AI detection tools remain unreliable, particularly for hybrid workflows where human-written prose is iteratively refined with ChatGPT or Claude, or where live-action footage is augmented with generative inpainting.

Industry observers expect the Academy to rely heavily on self-disclosure requirements, similar to how it currently handles eligibility documentation. Studios will likely need to certify the human authorship of submitted works, with penalties for misrepresentation. C2PA content credentials and provenance metadata could play a growing role in documenting which assets in a production pipeline were human-created versus machine-generated.
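To make the idea of documenting asset provenance concrete, here is a minimal Python sketch of a per-production manifest. It is not the C2PA format and does not use any C2PA library; the origin labels, field names, and function names are all assumptions made for illustration. It simply hashes each asset and records whether a studio declares it human-created, AI-assisted, or AI-generated.

```python
import hashlib
import json
from dataclasses import dataclass, asdict

# Hypothetical disclosure labels; a real scheme would define its own vocabulary.
ALLOWED_ORIGINS = ("human", "ai_assisted", "ai_generated")


@dataclass
class AssetRecord:
    path: str        # location of the asset within the production
    sha256: str      # content hash, so the declaration is tied to exact bytes
    origin: str      # one of ALLOWED_ORIGINS, as declared by the studio
    tool: str = ""   # optional name of the tool involved, if any


def record_asset(path: str, data: bytes, origin: str, tool: str = "") -> AssetRecord:
    """Hash the asset bytes and attach the declared origin."""
    if origin not in ALLOWED_ORIGINS:
        raise ValueError(f"unknown origin label: {origin!r}")
    return AssetRecord(path, hashlib.sha256(data).hexdigest(), origin, tool)


def summarize(records: list[AssetRecord]) -> dict[str, int]:
    """Count how many assets fall under each declared origin."""
    counts = {origin: 0 for origin in ALLOWED_ORIGINS}
    for rec in records:
        counts[rec.origin] += 1
    return counts


def build_manifest(title: str, records: list[AssetRecord]) -> str:
    """Serialize the declarations as JSON for submission or archiving."""
    manifest = {
        "title": title,
        "assets": [asdict(rec) for rec in records],
        "summary": summarize(records),
    }
    return json.dumps(manifest, indent=2, sort_keys=True)
```

A manifest like this only records what a studio declares. It cannot prove a declaration is true, which is why self-disclosure would still need penalties for misrepresentation behind it.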

Strategic Implications for the AI Video Industry

For companies building generative video tools, including Runway, Pika, Luma, and Stability AI, the ruling sends a complex market signal. Hollywood's most prestigious awards body has effectively drawn a line between AI as a tool and AI as an author. This framing could influence how these vendors position their products, with more emphasis on collaborative VFX and pre-visualization features than on full synthetic generation.

Meanwhile, the policy is likely to intensify demand for provenance and authenticity verification infrastructure. Tools that can certify human authorship, track AI involvement throughout a production, and produce auditable creation logs will become increasingly valuable, not just for Oscar eligibility but also for guild compliance, insurance underwriting, and adherence to the union contracts negotiated after the 2023 WGA and SAG-AFTRA strikes.
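One way an auditable creation log could work is a hash chain, in which each entry commits to the one before it so that later edits to the history become detectable. The Python sketch below illustrates that general technique only; the event fields and function names are hypothetical, and nothing here reflects a tool the Academy or any vendor has announced.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    """Hash the previous entry's hash together with a canonical event encoding."""
    payload = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_event(log: list[dict], event: dict) -> list[dict]:
    """Return a new log with the event appended and chained to the last entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"event": event, "prev_hash": prev_hash, "hash": _digest(prev_hash, event)}
    return log + [entry]


def verify_log(log: list[dict]) -> bool:
    """Recompute every hash in order; any altered or reordered entry fails."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```

In use, a production would append one event per creative step, for example `{"step": "scene 12 rewrite", "author": "human", "ai_tool": None}`, and an auditor would call `verify_log` on the finished log. The chain shows whether the record was altered after the fact, not whether the recorded events were truthful in the first place.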

Broader Industry Ripple Effects

Other awards bodies, including BAFTA, the Emmys, Cannes, and the major guilds, will face pressure to articulate their own positions. Streaming platforms commissioning original content may adopt similar restrictions to maintain awards eligibility, effectively extending the Academy's policy across premium productions.

The ruling also signals where the cultural debate over synthetic media is heading. While generative AI will continue to dominate lower-budget content, social video, and advertising, the highest tiers of dramatic filmmaking are coalescing around a human-authorship standard. Whether that line holds as AI capabilities improve—or whether enforcement proves untenable—will be one of the defining questions for the synthetic media industry over the next several years.

For deepfake detection researchers and authenticity infrastructure providers, the Academy's stance is a clear validation: as generative quality rises, institutional demand for verifiable human provenance is rising with it.
