
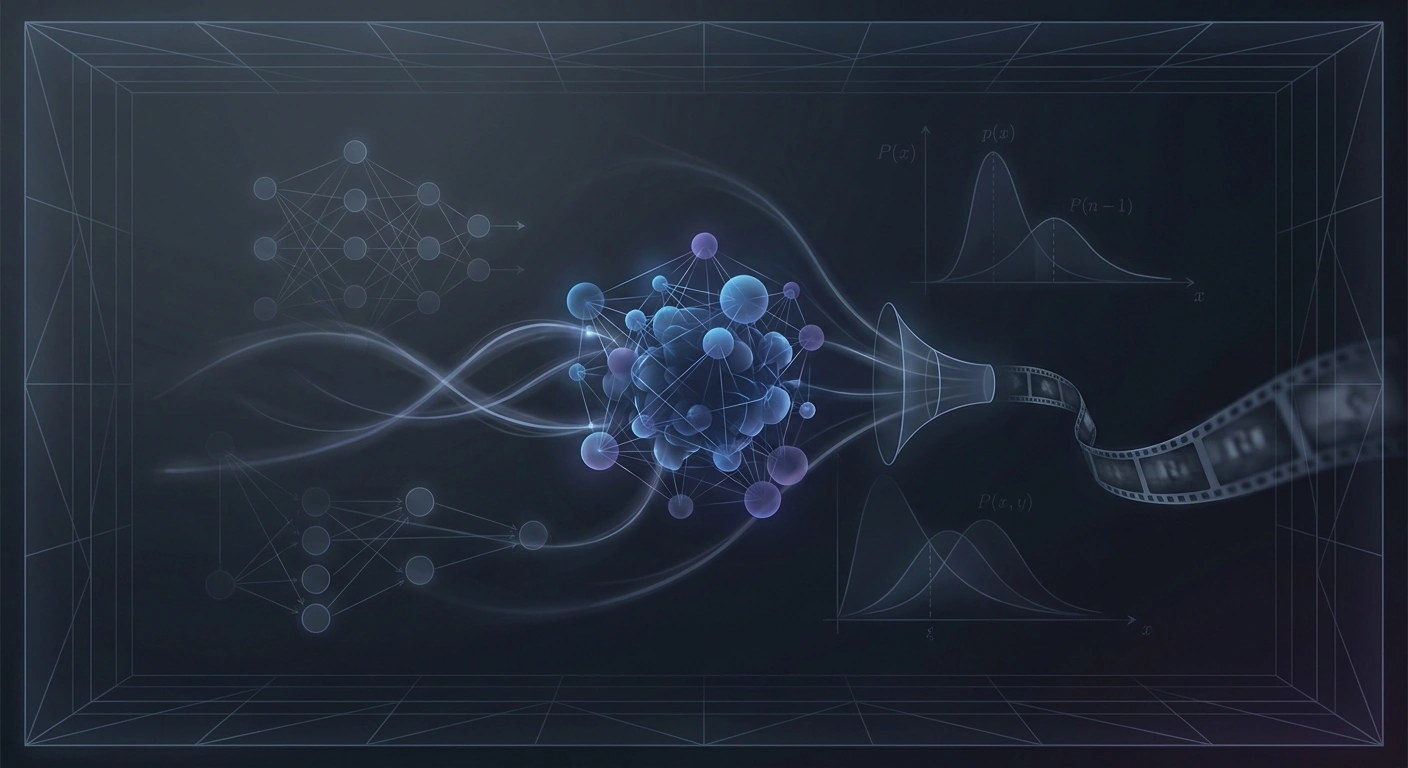

LLM Training as Lossy Compression: What Models Forget

New research reframes LLM training as lossy compression, arguing that learning is fundamentally a matter of strategic forgetting. The information-theoretic framework explains how models selectively discard information about their training data while retaining generalizable patterns.