SkillNet Framework Enables Modular AI Skill Networks

New research introduces SkillNet, a framework for creating, evaluating, and connecting modular AI skills that can be composed into complex agent capabilities.

A new research paper titled "SkillNet: Create, Evaluate, and Connect AI Skills" introduces a comprehensive framework for developing modular, composable AI capabilities. The work addresses one of the fundamental challenges in building sophisticated AI agents: how to create discrete, reusable skills that can be combined to accomplish complex tasks.

The Modular Skill Problem

As AI systems evolve from single-purpose models to general-purpose agents, researchers face a critical architectural question: should AI capabilities be monolithic or modular? The SkillNet framework firmly advocates for the latter approach, proposing a structured methodology for breaking down AI capabilities into discrete, testable, and connectable skills.

Traditional large language models and AI systems typically operate as black boxes, with capabilities emerging from massive training processes. While effective, this approach creates challenges for evaluation, debugging, and targeted improvement. SkillNet addresses these limitations by treating AI capabilities as a network of interconnected skills, each with clear interfaces and evaluation criteria.

The Three Pillars: Create, Evaluate, Connect

The framework is built around three core operations that form a complete lifecycle for AI skill development:

Skill Creation

SkillNet provides structured approaches for defining and implementing individual AI skills. Rather than relying on emergent capabilities from general training, the framework encourages explicit skill definition with clear input specifications, expected outputs, and behavioral constraints. This approach mirrors software engineering best practices, treating AI capabilities as first-class components that can be developed, versioned, and maintained independently.
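To make the idea of explicit skill definition concrete, here is a minimal Python sketch. It does not reproduce SkillNet's actual schema or API; the `Skill` class, its field names, and the `word_count` example are all hypothetical, chosen only to illustrate a skill that declares its inputs, expected output type, behavioral constraints, and version.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List


@dataclass(frozen=True)
class Skill:
    """Hypothetical skill record for illustration; not SkillNet's schema."""
    name: str
    version: str
    input_spec: Dict[str, type]      # required input fields and their types
    output_type: type                # expected type of the result
    run: Callable[..., Any]          # the implementation itself
    constraints: List[Callable[[Any], bool]] = field(default_factory=list)

    def __call__(self, **inputs: Any) -> Any:
        # Validate inputs against the declared specification.
        for key, expected in self.input_spec.items():
            if key not in inputs:
                raise ValueError(f"{self.name}: missing input '{key}'")
            if not isinstance(inputs[key], expected):
                raise TypeError(f"{self.name}: '{key}' must be {expected.__name__}")
        result = self.run(**inputs)
        # Validate the output type, then every behavioral constraint.
        if not isinstance(result, self.output_type):
            raise TypeError(f"{self.name}: output must be {self.output_type.__name__}")
        for check in self.constraints:
            if not check(result):
                raise ValueError(f"{self.name}: behavioral constraint violated")
        return result


# A toy skill: count the words in a piece of text.
word_count = Skill(
    name="word_count",
    version="0.1.0",
    input_spec={"text": str},
    output_type=int,
    run=lambda text: len(text.split()),
    constraints=[lambda n: n >= 0],
)

print(word_count(text="modular skills compose well"))  # 4
```

Because the specification lives alongside the implementation, a caller gets an immediate, named error when a contract is broken, rather than a failure somewhere downstream.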

Skill Evaluation

A critical innovation in SkillNet is its approach to skill assessment. The framework provides evaluation mechanisms that go beyond simple accuracy metrics, considering factors like reliability, consistency, and edge case handling. This granular evaluation enables developers to identify specific capability gaps rather than debugging opaque system-wide failures.
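As a rough illustration of evaluation that goes beyond a single accuracy number, the sketch below scores a skill on three axes. The metric definitions, the `evaluate_skill` function, and the `EvalReport` fields are assumptions made for this example, not the measures defined in the paper: accuracy is the share of cases with the expected output, reliability is the share of cases that complete without raising, and consistency is the share of completed cases that return the same output on every repeat.

```python
from dataclasses import dataclass
from typing import Any, Callable, List, Tuple


@dataclass
class EvalReport:
    accuracy: float          # cases whose output matched the expected value
    reliability: float       # cases that completed without an exception
    consistency: float       # completed cases with identical repeated outputs
    failed_cases: List[Any]  # inputs that raised or produced a wrong answer


def evaluate_skill(
    skill: Callable[[Any], Any],
    cases: List[Tuple[Any, Any]],
    repeats: int = 3,
) -> EvalReport:
    if not cases:
        raise ValueError("at least one test case is required")
    correct = completed = stable = 0
    failed: List[Any] = []
    for inp, expected in cases:
        try:
            outputs = [skill(inp) for _ in range(repeats)]
        except Exception:
            failed.append(inp)  # counts against accuracy and reliability
            continue
        completed += 1
        if all(out == outputs[0] for out in outputs):
            stable += 1
        if outputs[0] == expected:
            correct += 1
        else:
            failed.append(inp)
    total = len(cases)
    return EvalReport(
        accuracy=correct / total,
        reliability=completed / total,
        consistency=stable / completed if completed else 0.0,
        failed_cases=failed,
    )


# Toy skill with a deliberate gap: it cannot handle empty input.
def parse_int(text: str) -> int:
    return int(text.strip())


report = evaluate_skill(parse_int, [("42", 42), (" 7 ", 7), ("-3", -3), ("", 0)])
print(report.failed_cases)  # ['']
```

The report points at the specific input that fails (the empty string), which is the kind of localized gap the granular approach is meant to surface.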

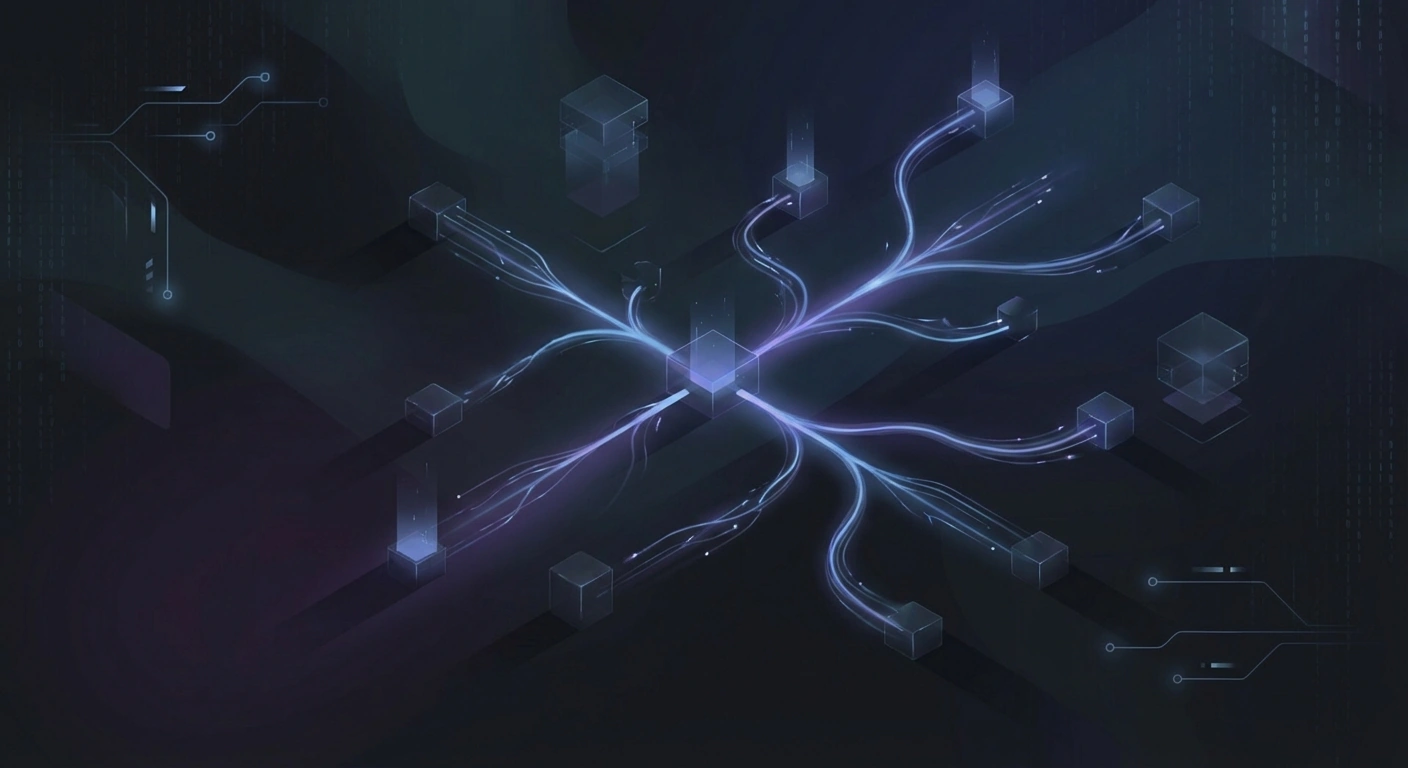

Skill Connection

Perhaps most importantly, SkillNet defines protocols for connecting skills into larger capability networks. Skills can be composed sequentially, in parallel, or in complex dependency graphs. The framework handles skill orchestration, data flow between components, and error propagation, all of which are common sources of failure in multi-step AI agent implementations.
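The three composition patterns can be sketched in a few lines of Python. This is an illustration under stated assumptions, not SkillNet's orchestration protocol: skills are modeled as plain callables, and `sequential`, `parallel`, and `run_graph` are hypothetical helper names. The dependency-graph case uses the standard library's `graphlib.TopologicalSorter` (Python 3.9+) to order the skills.

```python
from concurrent.futures import ThreadPoolExecutor
from graphlib import TopologicalSorter
from typing import Any, Callable, Dict, List


def sequential(*skills: Callable[[Any], Any]) -> Callable[[Any], Any]:
    """Feed each skill's output into the next one."""
    def run(value: Any) -> Any:
        for skill in skills:
            value = skill(value)
        return value
    return run


def parallel(*skills: Callable[[Any], Any]) -> Callable[[Any], List[Any]]:
    """Run every skill on the same input and collect the results in order."""
    def run(value: Any) -> List[Any]:
        with ThreadPoolExecutor() as pool:
            return list(pool.map(lambda skill: skill(value), skills))
    return run


def run_graph(
    skills: Dict[str, Callable[..., Any]],
    deps: Dict[str, List[str]],
    source: Any,
) -> Dict[str, Any]:
    """Run skills in dependency order; root skills receive `source`."""
    results: Dict[str, Any] = {}
    for name in TopologicalSorter(deps).static_order():
        parents = deps.get(name, [])
        args = [results[p] for p in parents] if parents else [source]
        try:
            results[name] = skills[name](*args)
        except Exception as exc:
            # Propagate the failure with the name of the skill that caused it.
            raise RuntimeError(f"skill '{name}' failed") from exc
    return results


skills = {
    "clean": str.strip,
    "tokens": str.split,
    "count": len,
    "summary": lambda tokens, count: f"{count} words, first={tokens[0]}",
}
deps = {"clean": [], "tokens": ["clean"], "count": ["tokens"],
        "summary": ["tokens", "count"]}

print(run_graph(skills, deps, "  hello modular world ")["summary"])
# 3 words, first=hello
```

Wrapping each call in `run_graph` means a failure is reported against the skill that raised it, while the original exception stays attached as the cause.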

Implications for AI Agent Development

The SkillNet approach has significant implications for the broader AI agent ecosystem. As organizations deploy increasingly sophisticated AI systems, the ability to audit, test, and improve specific capabilities becomes crucial. A modular skill architecture enables:

Targeted Fine-tuning: Rather than retraining entire models, developers can improve specific skills while maintaining stability in other areas. This reduces computational costs and minimizes regression risks.

Capability Auditing: With discrete skills, organizations can more easily verify that AI systems meet specific requirements, whether for regulatory compliance, safety constraints, or performance standards.

Skill Marketplaces: The standardized skill interface opens possibilities for skill sharing and reuse across organizations, potentially accelerating AI development through collaborative skill libraries.

Connection to Memory and Agent Architectures

SkillNet's modular approach complements recent advances in AI agent memory systems. Frameworks like AriadneMem and PlugMem, which provide hierarchical and task-agnostic memory modules for LLM agents, could potentially integrate with SkillNet to create agents with both persistent memory and modular skills.

The combination of sophisticated memory architectures with composable skill networks points toward a future where AI agents are assembled from verified, tested components rather than trained as monolithic systems. If it holds up in practice, this architectural shift could improve the reliability and trustworthiness of deployed AI systems.

Technical Considerations

Implementing skill-based architectures introduces overhead that monolithic approaches avoid. Skill boundaries require explicit data serialization, error handling, and orchestration logic. The framework must balance modularity benefits against performance costs, particularly for latency-sensitive applications.
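The boundary overhead described above can be pictured with a small sketch. It assumes, purely for illustration, that skills exchange JSON-serializable dictionaries; `call_across_boundary` and the result envelope are hypothetical and not part of SkillNet. Every call pays for a serialize and deserialize step in each direction, plus an error-handling wrapper, none of which a direct in-process function call would need.

```python
import json
from typing import Any, Callable, Dict


def call_across_boundary(
    skill: Callable[[Dict[str, Any]], Dict[str, Any]],
    payload: Dict[str, Any],
) -> Dict[str, Any]:
    """Invoke a skill as if it sat behind a process or network boundary."""
    try:
        wire_in = json.dumps(payload)           # serialize the request
        result = skill(json.loads(wire_in))     # skill sees only decoded data
        wire_out = json.dumps(result)           # serialize the response
    except Exception as exc:
        # Convert any failure into a structured error the caller can inspect.
        return {"ok": False, "error": f"{type(exc).__name__}: {exc}"}
    return {"ok": True, "value": json.loads(wire_out)}


def text_length(request: Dict[str, Any]) -> Dict[str, Any]:
    return {"length": len(request["text"])}


print(call_across_boundary(text_length, {"text": "hello"}))
# {'ok': True, 'value': {'length': 5}}
```

For a latency-sensitive application, the cost of these extra steps on every hop is what has to be weighed against the testing and auditing benefits of the boundary.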

Additionally, skill decomposition is not always straightforward. Some AI capabilities emerge from complex interactions that resist clean factorization. The SkillNet approach works best when skills have clear boundaries and well-defined interfaces, a constraint that may not suit all applications.

Looking Forward

As AI systems become more capable and more widely deployed, the need for systematic approaches to capability development, evaluation, and composition will only grow. SkillNet represents a significant step toward treating AI development with the same rigor applied to traditional software engineering.

For organizations building AI agents for content generation, media analysis, or authenticity verification, modular skill architectures offer compelling advantages. The ability to test detection skills independently, compose generation capabilities safely, and audit system behavior systematically addresses many concerns surrounding AI deployment in sensitive domains.
