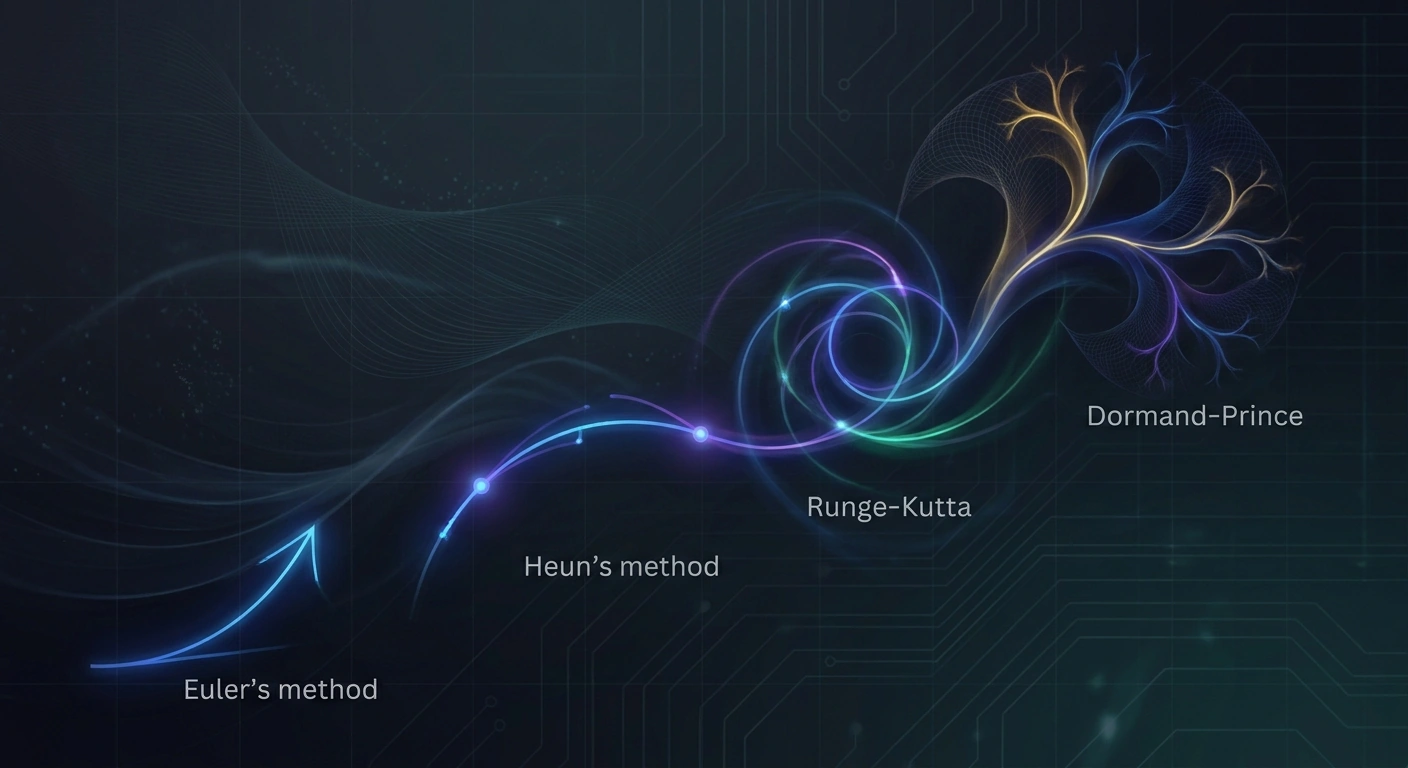

ODE Solvers for Flow Matching: Euler to Dormand-Prince

A technical deep dive into ODE solvers powering flow matching generative models, comparing Euler, Heun, Runge-Kutta, and adaptive Dormand-Prince methods that determine sample quality in modern video and image synthesis.

Flow matching has emerged as one of the most important paradigms in modern generative AI, powering state-of-the-art video and image synthesis systems including Stable Diffusion 3, Meta's Movie Gen, and many recent text-to-video models. At the heart of every flow matching system lies a numerical integration problem: solving an ordinary differential equation (ODE) that transforms noise into structured samples. The choice of ODE solver dramatically affects both generation quality and computational cost.

What Flow Matching Actually Computes

Flow matching trains a neural network to predict a velocity field that continuously transports samples from a simple prior distribution (typically Gaussian noise) to the target data distribution. At inference time, generation requires integrating this velocity field along a trajectory, that is, solving an ODE of the form dx/dt = v_θ(x, t). Under the convention used here, integration starts at t=0 with random noise and ends at t=1 with a generated sample; some papers and codebases reverse the time direction. Every numerical step calls the neural network at least once, so solver efficiency directly translates to inference latency.
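As a concrete reference point, the sketch below uses a one-dimensional toy problem whose velocity field is known in closed form, so no trained network is needed. It assumes a standard normal prior, a Gaussian target N(MU, S²), and the linear interpolation path x_t = (1 - t)·x_0 + t·x_1. The names MU, S, velocity, exact_flow and ode_residual are illustrative, not part of any library. The check confirms numerically that the analytic trajectory satisfies dx/dt = v(x, t).

```python
import math

MU, S = 2.0, 0.5  # hypothetical target mean and standard deviation

def velocity(x, t):
    """Closed-form marginal velocity E[x_1 - x_0 | x_t = x] for the toy problem."""
    var_t = (1.0 - t) ** 2 + (t * S) ** 2        # variance of x_t
    slope = (t * S * S - (1.0 - t)) / var_t      # d/dt of log(std of x_t)
    return MU + slope * (x - t * MU)

def exact_flow(x0, t):
    """Analytic solution of dx/dt = velocity(x, t) started from x0 at t = 0."""
    return t * MU + math.sqrt((1.0 - t) ** 2 + (t * S) ** 2) * x0

def ode_residual(x0, t, eps=1e-5):
    """|dx/dt - v(x, t)| along the analytic path, using a central difference."""
    dxdt = (exact_flow(x0, t + eps) - exact_flow(x0, t - eps)) / (2.0 * eps)
    return abs(dxdt - velocity(exact_flow(x0, t), t))

max_residual = max(
    ode_residual(x0, t) for x0 in (-1.5, 0.3, 1.0) for t in (0.1, 0.5, 0.9)
)
print(f"largest ODE residual: {max_residual:.2e}")
```

In this toy setting a noise value x_0 is carried to MU + S·x_0 at t=1, which gives every solver an exact answer to be measured against.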

Euler: The Baseline

The simplest approach is the explicit Euler method: x_{n+1} = x_n + h · v_θ(x_n, t_n), where h is the step size. Euler is first-order accurate, meaning the local truncation error scales as O(h²) and global error as O(h). For flow matching, Euler often requires 50-100 steps to produce high-quality samples. It's the default in many diffusion and flow matching pipelines because of its simplicity, but it leaves substantial quality on the table when step counts are reduced for faster inference.
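A minimal sketch of the Euler update, written against plain floats so it runs without dependencies (the same arithmetic applies to arrays or tensors). The trained network is replaced by an assumed closed-form toy field that carries a standard normal prior to a Gaussian N(MU, S²) along linear interpolation paths, for which the exact endpoint is MU + S·x_0. The names velocity and euler_sample are illustrative.

```python
MU, S = 2.0, 0.5  # hypothetical target mean and standard deviation

def velocity(x, t):
    """Assumed toy stand-in for v_theta: N(0, 1) -> N(MU, S^2), linear path."""
    var_t = (1.0 - t) ** 2 + (t * S) ** 2
    return MU + (t * S * S - (1.0 - t)) / var_t * (x - t * MU)

def euler_sample(v, x0, num_steps):
    """Integrate dx/dt = v(x, t) from t = 0 to t = 1 with explicit Euler."""
    h = 1.0 / num_steps
    x = x0
    for n in range(num_steps):
        x = x + h * v(x, n * h)  # one velocity (network) call per step
    return x

x0 = 1.0
exact = MU + S * x0  # analytic endpoint for this toy field
errors = {n: abs(euler_sample(velocity, x0, n) - exact) for n in (25, 50, 100, 200)}
for n, err in errors.items():
    print(f"{n:4d} steps  error {err:.6f}")
```

Doubling the step count should roughly halve the printed error, which is the O(h) global behaviour described above.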

Heun and Midpoint: Second-Order Improvements

Heun's method (improved Euler) evaluates the velocity field twice per step: once at the current point and once at an Euler-predicted endpoint, then averages the two: x_{n+1} = x_n + (h/2) · (k_1 + k_2), with k_1 = v_θ(x_n, t_n) and k_2 = v_θ(x_n + h · k_1, t_n + h). This achieves second-order accuracy (global error O(h²)) at the cost of doubling neural function evaluations (NFEs) per step. For flow matching models, Heun typically matches Euler quality at roughly half the steps, making total compute similar but often producing smoother trajectories. The midpoint method reaches the same order with the same two NFEs per step: it takes a half step with Euler, evaluates the velocity there, and uses that midpoint velocity for the full step.
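The two second-order updates can be sketched as follows. The velocity field is again an assumed closed-form toy (standard normal prior, Gaussian target N(MU, S²), linear interpolation path) standing in for a trained network, and heun_step, midpoint_step and integrate are illustrative names.

```python
MU, S = 2.0, 0.5  # hypothetical target mean and standard deviation

def velocity(x, t):
    """Assumed toy stand-in for v_theta: N(0, 1) -> N(MU, S^2), linear path."""
    var_t = (1.0 - t) ** 2 + (t * S) ** 2
    return MU + (t * S * S - (1.0 - t)) / var_t * (x - t * MU)

def heun_step(v, x, t, h):
    """Average the velocity at the start and at an Euler-predicted endpoint."""
    k1 = v(x, t)
    k2 = v(x + h * k1, t + h)
    return x + 0.5 * h * (k1 + k2)

def midpoint_step(v, x, t, h):
    """Use the velocity at an Euler-predicted midpoint for the full step."""
    k1 = v(x, t)
    k2 = v(x + 0.5 * h * k1, t + 0.5 * h)
    return x + h * k2

def integrate(step, v, x0, num_steps):
    """Apply a fixed-step method from t = 0 to t = 1."""
    h = 1.0 / num_steps
    x = x0
    for n in range(num_steps):
        x = step(v, x, n * h, h)
    return x

x0 = 1.0
exact = MU + S * x0  # analytic endpoint for this toy field
heun_err = {n: abs(integrate(heun_step, velocity, x0, n) - exact) for n in (20, 100, 200)}
mid_err = {n: abs(integrate(midpoint_step, velocity, x0, n) - exact) for n in (20, 100, 200)}
for n in heun_err:
    print(f"{n:4d} steps  heun {heun_err[n]:.2e}  midpoint {mid_err[n]:.2e}")
```

Both functions make exactly two velocity calls per step, so a run with N steps costs 2N NFEs.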

Runge-Kutta 4: The Workhorse

Classical RK4 evaluates the velocity field at four points per step (the start, two midpoint estimates, and an endpoint estimate) and combines them with weights 1/6, 1/3, 1/3, 1/6, chosen so that error terms cancel through fourth order and the global error scales as O(h⁴). Each step costs 4 NFEs, but the reduction in error per step often makes RK4 efficient overall, particularly in difficult sampling regions where the velocity field has high curvature. In video generation, where temporal coherence demands precise integration, RK4 can outperform lower-order methods at matched compute budgets, though this should be verified per model.
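A sketch of the classical RK4 step, compared against Euler at the same budget of 40 velocity calls. As an assumption, the network is replaced by a closed-form toy field (standard normal prior, Gaussian target N(MU, S²), linear interpolation path) whose exact endpoint is MU + S·x_0; rk4_step, euler_step and integrate are illustrative names.

```python
MU, S = 2.0, 0.5  # hypothetical target mean and standard deviation

def velocity(x, t):
    """Assumed toy stand-in for v_theta: N(0, 1) -> N(MU, S^2), linear path."""
    var_t = (1.0 - t) ** 2 + (t * S) ** 2
    return MU + (t * S * S - (1.0 - t)) / var_t * (x - t * MU)

def euler_step(v, x, t, h):
    return x + h * v(x, t)

def rk4_step(v, x, t, h):
    """Classical fourth-order Runge-Kutta step: four velocity calls."""
    k1 = v(x, t)
    k2 = v(x + 0.5 * h * k1, t + 0.5 * h)
    k3 = v(x + 0.5 * h * k2, t + 0.5 * h)
    k4 = v(x + h * k3, t + h)
    return x + (h / 6.0) * (k1 + 2.0 * k2 + 2.0 * k3 + k4)

def integrate(step, v, x0, num_steps):
    """Apply a fixed-step method from t = 0 to t = 1."""
    h = 1.0 / num_steps
    x = x0
    for n in range(num_steps):
        x = step(v, x, n * h, h)
    return x

x0 = 1.0
exact = MU + S * x0  # analytic endpoint for this toy field
euler_err = abs(integrate(euler_step, velocity, x0, 40) - exact)  # 40 NFEs
rk4_err = abs(integrate(rk4_step, velocity, x0, 10) - exact)      # 40 NFEs
print(f"euler, 40 steps: {euler_err:.2e}")
print(f"rk4,   10 steps: {rk4_err:.2e}")
```

A smooth one-dimensional toy flatters high-order methods; a learned velocity field is only as smooth as the network, so the gap on a real model may be smaller.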

Dormand-Prince: Adaptive Step Sizing

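The Dormand-Prince (DOPRI5) method is an embedded Runge-Kutta scheme that computes a fifth-order solution and a fourth-order solution from the same set of stage evaluations. The difference between them provides a local error estimate, which the solver compares against a user-set tolerance to accept or reject the step and to shrink or grow the step size. The scheme has seven stages, but the last stage of an accepted step doubles as the first stage of the next, so each accepted step costs about 6 NFEs; rejected steps add to the total. When the velocity field is smooth, DOPRI5 takes large steps; when curvature spikes, it automatically refines.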

For generative models, this adaptivity is particularly valuable because the velocity field's complexity can vary substantially across the trajectory, typically smooth near the noise endpoint and highly structured near the data endpoint. Adaptive solvers concentrate compute where it matters. The trade-off is implementation complexity and a data-dependent number of steps: runtime is hard to predict, and samples in the same batch may call for different step sizes, which complicates batch processing on GPUs.
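The embedded step and step-size control can be sketched in plain Python. The coefficients are the standard Dormand-Prince 5(4) tableau; everything else is an assumption made for illustration: the toy velocity field (standard normal prior, narrow Gaussian target, linear interpolation path), the tolerances, the 0.9 safety factor, the clamp on step growth, and the names dopri5_step and dopri5_sample. For brevity the sketch does not reuse the last stage between steps, so it spends 7 velocity calls per attempted step.

```python
MU, S = 2.0, 0.1  # hypothetical narrow target: the field sharpens near t = 1

def velocity(x, t):
    """Assumed toy stand-in for v_theta: N(0, 1) -> N(MU, S^2), linear path."""
    var_t = (1.0 - t) ** 2 + (t * S) ** 2
    return MU + (t * S * S - (1.0 - t)) / var_t * (x - t * MU)

# Dormand-Prince 5(4) Butcher tableau.
C = [0.0, 1 / 5, 3 / 10, 4 / 5, 8 / 9, 1.0, 1.0]
A = [
    [],
    [1 / 5],
    [3 / 40, 9 / 40],
    [44 / 45, -56 / 15, 32 / 9],
    [19372 / 6561, -25360 / 2187, 64448 / 6561, -212 / 729],
    [9017 / 3168, -355 / 33, 46732 / 5247, 49 / 176, -5103 / 18656],
    [35 / 384, 0.0, 500 / 1113, 125 / 192, -2187 / 6784, 11 / 84],
]
B5 = A[6] + [0.0]  # fifth-order weights
B4 = [5179 / 57600, 0.0, 7571 / 16695, 393 / 640,
      -92097 / 339200, 187 / 2100, 1 / 40]  # fourth-order weights

def dopri5_step(v, x, t, h):
    """One attempted step: fifth-order update plus embedded error estimate."""
    k = []
    for i in range(7):
        xi = x + h * sum(a * kj for a, kj in zip(A[i], k))
        k.append(v(xi, t + C[i] * h))
    x5 = x + h * sum(b * kj for b, kj in zip(B5, k))
    x4 = x + h * sum(b * kj for b, kj in zip(B4, k))
    return x5, abs(x5 - x4)

def dopri5_sample(v, x0, rtol=1e-6, atol=1e-8, h0=0.1):
    """Adaptive integration from t = 0 to t = 1; returns (x, accepted times, NFEs)."""
    t, x, h = 0.0, x0, h0
    accepted, nfe = [], 0
    while 1.0 - t > 1e-12:
        h = min(h, 1.0 - t)                 # do not step past t = 1
        x_new, err = dopri5_step(v, x, t, h)
        nfe += 7
        tol = atol + rtol * max(abs(x), abs(x_new))
        if err <= tol:                      # accept; otherwise retry with smaller h
            t, x = t + h, x_new
            accepted.append(t)
        factor = 0.9 * (tol / err) ** 0.2 if err > 0.0 else 5.0
        h *= min(5.0, max(0.2, factor))     # clamp how fast h may change
    return x, accepted, nfe

x0 = 1.0
x1, times, nfe = dopri5_sample(velocity, x0)
early = sum(1 for t in times if t <= 0.5)
late = len(times) - early
print(f"error {abs(x1 - (MU + S * x0)):.2e}, NFEs {nfe}, "
      f"accepted steps: {early} in [0, 0.5], {late} in (0.5, 1]")
```

Because the number of accepted and rejected steps depends on the input, two samples in the same batch can finish after different numbers of velocity calls, which is the batching difficulty noted above.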

Implications for Video and Image Generation

Solver choice has direct consequences for production AI video systems. A model running with Euler at 50 steps consumes 50 NFEs per generated frame; switching to Heun at 20 steps consumes 40 NFEs while often improving quality. For long-form video generation, where each second may require dozens of frames each requiring tens of NFEs, these differences compound into hours of GPU time.
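The NFE arithmetic in this comparison is simple enough to write down directly. The sketch below uses the per-step costs of the fixed-step methods discussed earlier; the function names and the 24 fps, 10 second clip are hypothetical, and the per-clip figure assumes each frame is sampled independently, matching the per-frame accounting used here.

```python
# Velocity (network) calls per step for the fixed-step solvers.
NFE_PER_STEP = {"euler": 1, "heun": 2, "midpoint": 2, "rk4": 4}

def nfe_per_sample(solver, num_steps):
    """Total network calls needed to generate one sample."""
    return NFE_PER_STEP[solver] * num_steps

def nfe_per_clip(solver, num_steps, fps, seconds):
    """Total network calls for a clip, assuming frames are sampled independently."""
    return nfe_per_sample(solver, num_steps) * fps * seconds

euler_nfe = nfe_per_sample("euler", 50)
heun_nfe = nfe_per_sample("heun", 20)
print(f"euler@50: {euler_nfe} NFEs, heun@20: {heun_nfe} NFEs")

# Hypothetical 10-second clip at 24 frames per second.
euler_clip = nfe_per_clip("euler", 50, fps=24, seconds=10)
heun_clip = nfe_per_clip("heun", 20, fps=24, seconds=10)
print(f"clip totals: euler {euler_clip}, heun {heun_clip}")
```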

Recent work on trajectory straightening and distillation, such as rectified flow (with its reflow procedure) and consistency models, aims to reduce required solver steps to as few as 1-4, in the rectified flow case by training the network so the trajectory is nearly straight. When every trajectory is a straight line traversed at constant velocity, Euler is exact, even with a single step. This represents an interesting trade-off between training-time straightening and inference-time integration sophistication.
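The exactness of Euler on straight trajectories can be checked with a small sketch. It assumes an idealized, perfectly straightened field in which each noise value x0 travels to a fixed target x1 along x(t) = (1 - t)·x0 + t·x1; straight_velocity and euler_sample are illustrative names, and a real distilled model would only approximate this behaviour.

```python
def straight_velocity(x, t, x1):
    """Velocity of the straight path toward x1; constant along each trajectory."""
    return (x1 - x) / (1.0 - t)

def euler_sample(v, x0, num_steps):
    """Integrate dx/dt = v(x, t) from t = 0 to t = 1 with explicit Euler."""
    h = 1.0 / num_steps
    x = x0
    for n in range(num_steps):
        x = x + h * v(x, n * h)  # only evaluated at t < 1, so no division by zero
    return x

x0, x1 = -0.7, 2.5  # hypothetical noise value and target value

def field(x, t):
    return straight_velocity(x, t, x1)

one_step = euler_sample(field, x0, 1)
four_steps = euler_sample(field, x0, 4)
print(f"1 step: {one_step}, 4 steps: {four_steps}, target: {x1}")
```

Along the path, (x1 - x) / (1 - t) always equals x1 - x0, so every Euler step moves by the same exact increment and extra steps add cost without adding accuracy.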

Practical Takeaway

For practitioners deploying flow matching generative models, the solver is a critical hyperparameter often left at default. Benchmarking Euler, Heun, RK4, and DOPRI5 across the actual quality-vs-latency Pareto frontier of your specific model can yield substantial improvements — sometimes 2-3x faster inference at matched quality. As AI video generation moves toward real-time applications, mastery of these classical numerical methods becomes increasingly strategic.
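A benchmarking harness along these lines can be quite small. The sketch below counts velocity calls with a wrapper and compares fixed-step solvers at matched NFE budgets on an assumed closed-form toy field (standard normal prior, Gaussian target N(MU, S²), linear interpolation path). All names are illustrative; in a real pipeline the error against the exact endpoint would be replaced by a sample-quality metric and the call count by measured latency.

```python
MU, S = 2.0, 0.5  # hypothetical target mean and standard deviation

def velocity(x, t):
    """Assumed toy stand-in for v_theta: N(0, 1) -> N(MU, S^2), linear path."""
    var_t = (1.0 - t) ** 2 + (t * S) ** 2
    return MU + (t * S * S - (1.0 - t)) / var_t * (x - t * MU)

def euler_step(v, x, t, h):
    return x + h * v(x, t)

def heun_step(v, x, t, h):
    k1 = v(x, t)
    k2 = v(x + h * k1, t + h)
    return x + 0.5 * h * (k1 + k2)

def rk4_step(v, x, t, h):
    k1 = v(x, t)
    k2 = v(x + 0.5 * h * k1, t + 0.5 * h)
    k3 = v(x + 0.5 * h * k2, t + 0.5 * h)
    k4 = v(x + h * k3, t + h)
    return x + (h / 6.0) * (k1 + 2.0 * k2 + 2.0 * k3 + k4)

# Solver name -> (step function, velocity calls per step).
SOLVERS = {"euler": (euler_step, 1), "heun": (heun_step, 2), "rk4": (rk4_step, 4)}

def run(step, v, x0, num_steps):
    """Apply a fixed-step method from t = 0 to t = 1."""
    h = 1.0 / num_steps
    x = x0
    for n in range(num_steps):
        x = step(v, x, n * h, h)
    return x

def benchmark(nfe_budgets, x0=1.0):
    """Return (budget, solver, measured NFEs, error) rows at matched budgets."""
    exact = MU + S * x0
    rows = []
    for budget in nfe_budgets:
        for name, (step, cost) in SOLVERS.items():
            calls = [0]

            def counted(x, t, calls=calls):
                calls[0] += 1  # count every velocity evaluation
                return velocity(x, t)

            x1 = run(step, counted, x0, budget // cost)
            rows.append((budget, name, calls[0], abs(x1 - exact)))
    return rows

results = benchmark([8, 20, 40])
for budget, name, nfe, err in results:
    print(f"budget {budget:3d}  {name:5s}  NFEs {nfe:3d}  error {err:.2e}")
```

Counting calls inside the wrapper, rather than trusting the nominal cost per step, keeps the comparison honest if a solver is later swapped for an adaptive one.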
